- Transfer Learning 101: Techniques & Frameworks

- 1.1 Why Transfer Learning Matters: From Cats to Cancer Detection

- 1.2 What is Transfer Learning?

- 1.3 Transfer Learning versus Fine-Tuning

- 1.4 Transfer Learning Strategies

- 1.5 The Layers of a Neural Network (And What They Learn)

- 1.6 Peeking Under the Hood: What Happens When You ‘Unfreeze’ a Neural Net

- 1.7 How to Choose Which Layers to Unfreeze

- 1.8 Different Flavors of Transfer Learning

- 1. Pretrained Feature Extraction (Fastest & Simplest)

- 2. Fine-Tuning Pretrained Models (Most Common)

- 3. Head Swapping (Custom Top Layers)

- 4. Domain-Adaptive Transfer Learning

- 5. Progressive Layer Unfreezing (Discriminative Fine-Tuning)

- 6. Knowledge Distillation (KD)

- 7. Multi-Task Transfer Learning

- 8. Self-Supervised Pretraining + Fine-Tuning

- 9. Zero-Shot / Few-Shot Transfer (Foundation Models)

- 10. Cross-Modal Transfer Learning

- Transfer Learning Cheat Sheet (with Medical Imaging Examples)

- 1.9 Common Pitfalls and How to Troubleshoot

- 1.10 Advanced Transfer Learning Techniques

- 1.11 Final summary / quick decision guide

1.1 Why Transfer Learning Matters: From Cats to Cancer Detection

At first glance, teaching a computer to recognize cats on the internet and detecting cancer in an X-ray might seem worlds apart. But in AI, they are more closely connected than you think.

This connection comes from transfer learning — the idea that a model trained on one task (like spotting cats, dogs, or buses in millions of everyday photos) can be repurposed for something entirely different, like identifying tumours in medical scans.

Why does this matter?

- Data is scarce in the real world. Doctors don’t have millions of perfectly labelled cancer images to train a model from scratch.

- Compute is expensive. Most organizations can’t afford to train billion-parameter models from zero.

- Time is critical. Transfer learning allows researchers and companies to move from idea to working solution faster.

By reusing knowledge from one domain (cats and buses) and adapting it to another (cancer detection), transfer learning levels the playing field. It makes cutting-edge AI accessible not just to big tech labs, but to healthcare institutions, educators, startups, and researchers across the globe.

1.2 What is Transfer Learning?

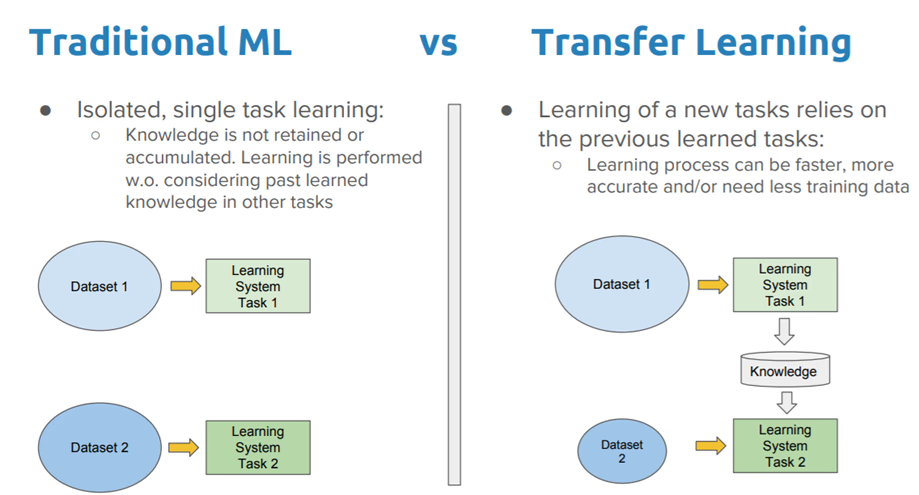

Think about learning to ride a bicycle. The first time was tricky — balancing, steering, not toppling over. But once you mastered it, riding a motorbike wasn’t an entirely new challenge. You didn’t have to relearn balance or steering. You only had to pick up the new skills — how to use the clutch, gears, and throttle.

That’s exactly how transfer learning in machine learning works.

In the AI world, the “bicycle” might be a model trained on ImageNet, a giant dataset with over 14 million images of everyday objects like cats, buses, and bananas. This model already knows how to detect shapes, edges, and colors. When you face a new challenge — say diagnosing plant diseases from leaf photos or detecting fractures in X-rays — you don’t start from zero. You transfer what the model already knows and just fine-tune it for your specific problem.

In short, transfer learning works because knowledge is reusable. Once a model has learned general patterns, those patterns can often be applied to new but related tasks.

This approach solves one of the biggest challenges in AI: how to achieve high accuracy when you don’t have much data of your own. Training a model from scratch with limited data is like trying to teach someone a new language using only a handful of words — frustrating and inaccurate. Transfer learning sidesteps this by leveraging prior knowledge, making AI accessible even in areas where data is scarce, from medical imaging to agriculture.

Before diving in, though, data scientists ask three key questions:

- What knowledge can I transfer? (For example, should I reuse all the features the model learned, or just the early ones like “edges and colors”?)

- When should I transfer — and when shouldn’t I? (Not all knowledge transfers well; sometimes it can even hurt performance, a problem known as negative transfer.)

- How do I transfer this knowledge to my specific domain? (Do I freeze most of the model and only train the last layer, or do I fine-tune more deeply?)

Answering these questions helps decide the best strategy — whether to lightly reuse what’s learned, or to dig deeper and retrain more of the model.

1.3 Transfer Learning versus Fine-Tuning

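These two terms often get mixed up, and in everyday usage they overlap: fine-tuning is also one of the most common ways to carry out transfer learning. They’re still not quite the same, so think of them as cousins in the world of machine learning.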

- Transfer Learning means taking a model that has already learned from one problem and adapting it to a different but related problem. For example, a model trained on millions of animal photos can be repurposed to identify plant species, because both tasks involve recognizing shapes and patterns in images.

- Fine-Tuning is more like polishing. Here, you take a pre-trained model and continue training it — not for a completely new task, but for the same or a very similar task so that it performs better. Imagine you built a general object detection model using a massive dataset like ImageNet or COCO. If your actual goal is to detect cars more accurately, you’d fine-tune that model using a smaller, car-specific dataset. The base knowledge stays the same, but the model sharpens its focus.

So, to put it simply:

- Transfer Learning → apply knowledge to a new problem.

- Fine-Tuning → improve performance on the original or closely related problem.

Both approaches save time and resources compared to training from scratch, but they shine in different situations.

1.4 Transfer Learning Strategies

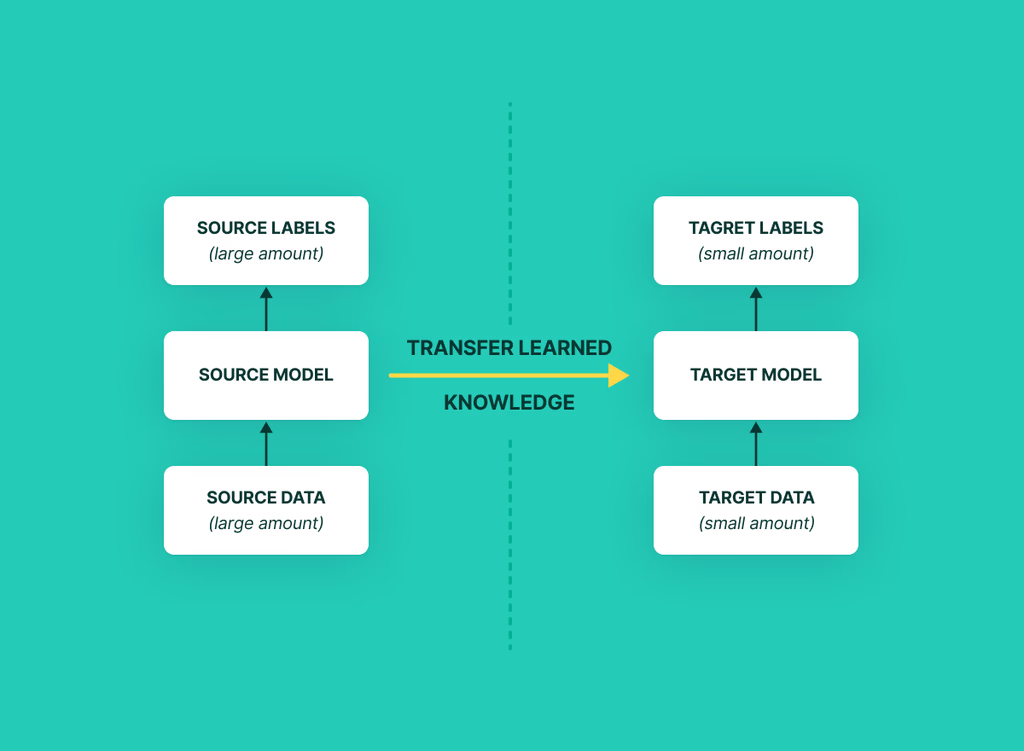

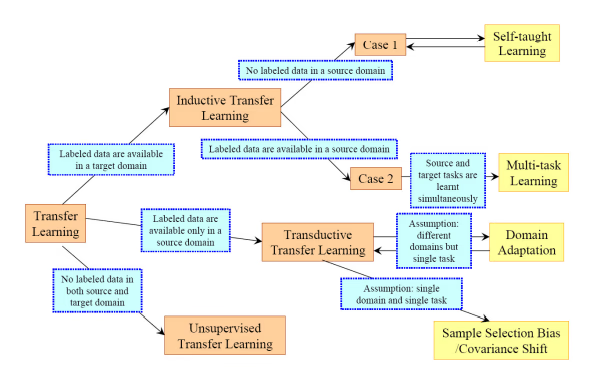

Not all transfer learning is created equal. The way you transfer knowledge depends on the relationship between your starting point (the source domain) and your final goal (the target domain). At a high level, there are three main types of transfer learning strategies:

Inductive Transfer Learning

Here, the source and target data come from the same world, but the tasks are different.

Think of it this way: you already know how to solve math problems, but now you’re asked to solve physics problems. The numbers and formulas look familiar, but the purpose has shifted. In machine learning, this could mean using a model trained on general medical images and adapting it to detect tumours. The data (medical scans) is the same, but the task changes.

Transductive Transfer Learning

This is used when the tasks are the same, but the domains are different.

For example, imagine you trained a spam filter using English emails (source domain) and now want to use it for Spanish emails (target domain). The task — spam detection — is the same, but the data comes from a different domain. Usually, there’s plenty of labelled data in the source, while the target data is mostly unlabelled. The model transfers what it knows about spam and adapts it to the new language.

Unsupervised Transfer Learning

This one is trickier. It’s like Inductive Transfer Learning, but without labelled answers. Both the source and target tasks rely on unlabelled data.

Imagine listening to music without lyrics — you can still pick up rhythm and mood, but no one tells you explicitly “this is jazz” or “this is pop.” Similarly, the model transfers what it learned from one unlabelled dataset to help make sense of another.

These strategies set the stage, but to truly grasp how transfer learning works, we’ll need to peek under the hood of neural networks and understand what each layer of the model actually learns. That’s where we’re heading next.

1.5 The Layers of a Neural Network (And What They Learn)

At their core, most machine learning models are built on a neural network — a mathematical framework loosely inspired by the way our brains process information. You can think of it as a feature factory. Each layer in the network transforms the raw input into something more meaningful, step by step, until the model can make a final prediction.

Let’s break it down using an image recognition example:

| Layer Type | What It Learns (Image Example) | Transferability |
| --- | --- | --- |
| Bottom / Early Layers (Stem) | The “first look.” Detects very simple features like edges, corners, and textures. | 🔁 Highly reusable |
| Middle Layers | Spots shapes, object parts, and patterns (like “ears” or “wheels”). | 🔁 Somewhat reusable |
| Top / Late Layers (Classifier block) | Recognizes full objects (cats, dogs, buses). | ❌ Usually not reusable |
| Head | Makes the final decision, e.g., “This is a Labrador” or “This is a mango leaf.” | ❌ Always replaced |

Pipeline view:

Input → [Bottom Layers] → [Middle Layers] → [Top Layers] → [Head]

In transfer learning, you’ll often hear the word Backbone. This simply means the pretrained feature-extraction layers of the model (bottom + middle + top), with the earliest layers being the most reusable. The Head is the replaceable part: the task-specific layer you swap out depending on whether you want to classify dog breeds, detect plant diseases, or recognize handwritten numbers.

- Backbone = pre-trained feature extractor

- Head = task-specific adaptor

This layered structure is why transfer learning works so well. The early and middle layers capture general knowledge about the world (edges, shapes, textures), which can be reused across many tasks. Only the very top and the head need to be retrained for your new problem.
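The backbone/head split can be sketched in a few lines of PyTorch. This is only an illustration under stated assumptions: the tiny convolutional stack stands in for a real pretrained backbone (it is randomly initialized here), and the layer sizes and the 5-class head are hypothetical.

```python
import torch
from torch import nn

# Toy stand-in for a pretrained backbone. Assumption: a real project would
# load pretrained weights instead of using this random initialization.
backbone = nn.Sequential(
    nn.Conv2d(3, 8, kernel_size=3, padding=1),   # "bottom": edges, textures
    nn.ReLU(),
    nn.Conv2d(8, 16, kernel_size=3, padding=1),  # "middle": shapes, parts
    nn.ReLU(),
    nn.AdaptiveAvgPool2d(1),                     # pool to one vector per image
    nn.Flatten(),                                # shape: (batch, 16)
)

# The head is the only task-specific piece; swapping tasks means swapping it.
new_head = nn.Linear(16, 5)  # hypothetical new task with 5 classes

model = nn.Sequential(backbone, new_head)

images = torch.randn(4, 3, 32, 32)  # fake batch of 4 RGB images
logits = model(images)
print(logits.shape)  # torch.Size([4, 5])
```

The same `backbone` object could be paired with a different head (say, `nn.Linear(16, 10)`) without touching the feature-extraction layers.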

1.6 Peeking Under the Hood: What Happens When You ‘Unfreeze’ a Neural Net

When machine learning folks say “unfreezing a layer,” it’s not about ice. It means we allow that part of the model to keep learning — updating its weights during training — instead of leaving it “frozen” (unchanged).

Think of it like a music band:

- The backbone musicians already know the basic tune.

- The head is the lead singer who changes depending on the song.

- When you “unfreeze” players, you let them improvise — some, or all, depending on how much adaptation you need.

Here’s what happens in practice:

1. Unfreeze only the head (Feature Extraction)

- What it means: You keep the backbone fixed and only train the final task-specific layer.

- Pros: Fast, efficient, and low risk of overfitting.

- Cons: Limited adaptability. If your new task is very different, accuracy may plateau.

2. Unfreeze just the top block(s) (Partial Fine-Tuning)

- What it means: The upper layers adapt while the lower layers stay frozen.

- Pros: A good balance — adapts to new data while preserving general features like edges and textures.

- Cons: More compute required; needs careful tuning of learning rates.

3. Unfreeze many or all layers (Full Fine-Tuning)

- What it means: The entire network is free to learn from your new data.

- Pros: Maximum flexibility — best when your target domain is very different or when you have lots of labeled data.

- Cons: Risky. The model might “forget” its original knowledge (catastrophic forgetting) or overfit. Also, it’s computationally expensive.

4. Unfreeze middle layers but keep bottom frozen

- What it means: You assume low-level features (edges, textures) are universal, but mid-level features (shapes, parts) need tweaking.

- When useful: For tasks where raw visuals remain the same but higher-order features differ (e.g., industrial textures vs. natural ones).
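In PyTorch, freezing and unfreezing come down to toggling `requires_grad` on parameters. The sketch below applies the four options above to a hypothetical model made of three backbone blocks and a head. The block names and sizes are made up for illustration, and treating option 4 as "middle + top + head" is one reasonable reading rather than a fixed rule.

```python
from torch import nn

def set_trainable(module: nn.Module, trainable: bool) -> None:
    """Freeze (False) or unfreeze (True) every parameter in a module."""
    for p in module.parameters():
        p.requires_grad = trainable

# Hypothetical model: three backbone blocks plus a task head.
model = nn.ModuleDict({
    "bottom": nn.Linear(32, 32),
    "middle": nn.Linear(32, 32),
    "top": nn.Linear(32, 32),
    "head": nn.Linear(32, 4),
})

def apply_strategy(model: nn.ModuleDict, trainable_parts) -> None:
    """Freeze everything, then unfreeze only the named parts."""
    set_trainable(model, False)
    for name in trainable_parts:
        set_trainable(model[name], True)

def count_trainable(model: nn.Module) -> int:
    return sum(p.numel() for p in model.parameters() if p.requires_grad)

strategies = {
    "1. head only": ["head"],
    "2. top block + head": ["top", "head"],
    "3. full fine-tuning": ["bottom", "middle", "top", "head"],
    "4. bottom frozen": ["middle", "top", "head"],
}
for label, parts in strategies.items():
    apply_strategy(model, parts)
    print(label, "->", count_trainable(model), "trainable parameters")
```

Counting trainable parameters after each change is a cheap sanity check that the intended layers, and only those, will be updated.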

Practical Playbook (How Experts Actually Do It)

A. Two-Stage Approach (default choice) – balances speed, stability, and accuracy

- Train the head only, while keeping the backbone frozen. Use a relatively higher learning rate (LR).

- Then unfreeze the last block or last few layers and fine-tune with a much smaller LR (10–100× smaller).

B. Progressive Unfreezing (gradual approach) – Great for steady improvements when you have more time

Unfreeze one block at a time:

- First, unfreeze the top block → train.

- Then, unfreeze more → train again.
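The progressive schedule is easy to express as a plain Python generator. This is a minimal sketch: the block names are hypothetical, and the schedule starts with the head alone before working down through the backbone.

```python
def progressive_unfreeze_schedule(blocks):
    """Yield the list of trainable blocks for each training stage.

    `blocks` is ordered from input (bottom) to output (head). The first
    stage trains only the last block; each later stage adds the next one down.
    """
    for stage in range(1, len(blocks) + 1):
        yield blocks[-stage:]

blocks = ["bottom", "middle", "top", "head"]  # hypothetical block names
for stage, trainable in enumerate(progressive_unfreeze_schedule(blocks)):
    print(f"stage {stage}: train {trainable}")
```

In a real training loop, each stage would run for a few epochs before the next block is unfrozen.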

C. Discriminative Learning Rates

Not all layers learn at the same pace. Think of it as adjusting the “volume knobs” differently for each part of the network.

- Head: highest LR (fast learning).

- Top pretrained layers: low LR.

- Bottom pretrained layers: very low LR or frozen.

Why it matters: Unfreezing layers wisely is the secret to avoiding wasted compute, poor accuracy, or catastrophic forgetting. It’s one of the most practical skills in applying transfer learning effectively.
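Discriminative learning rates map directly onto PyTorch optimizer parameter groups. The sketch below assumes a hypothetical three-part model, and the specific learning rates are illustrative rather than recommended values.

```python
import torch
from torch import nn

# Hypothetical model split into named parts.
model = nn.ModuleDict({
    "bottom": nn.Linear(16, 16),
    "top": nn.Linear(16, 16),
    "head": nn.Linear(16, 3),
})

# Bottom layers stay frozen and are simply left out of the optimizer.
for p in model["bottom"].parameters():
    p.requires_grad = False

# One parameter group per part, each with its own learning rate.
optimizer = torch.optim.AdamW([
    {"params": model["head"].parameters(), "lr": 1e-3},  # new head: fastest
    {"params": model["top"].parameters(), "lr": 1e-5},   # pretrained top: slow
])

for group in optimizer.param_groups:
    print(group["lr"])
```

Because each group carries its own `lr`, a single `optimizer.step()` updates the head aggressively and the pretrained layers gently.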

1.7 How to Choose Which Layers to Unfreeze

Now that we know what “unfreezing” means, the next question is: how do you decide which layers to let learn again? There’s no one-size-fits-all answer, but data scientists use a few simple heuristics (rules of thumb).

Rule 1: Look at Your Dataset

- Very small dataset + similar domain (e.g., cats → dogs): Train the head only, or maybe the last block.

- Medium dataset or moderately different domain (e.g., natural photos → medical scans): Unfreeze 2–4 blocks.

- Large dataset or big domain shift (e.g., natural photos → satellite images): Full fine-tuning usually works best.

Rule 2: Consider Your Compute Budget

- Limited resources (laptop/GPU time) → Keep most layers frozen.

- Plenty of compute (cloud clusters) → You can fine-tune more deeply, with careful learning rates.

Rule 3: Probe Before You Commit

- Train only the head (linear probe).

- If accuracy plateaus below what you need, gradually unfreeze more layers.

This way you don’t waste compute on full fine-tuning when a lighter touch would work.
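The "probe first, unfreeze only if needed" loop can be sketched as a small control function. Everything here is illustrative: `evaluate` is a hypothetical callable that would train with the given blocks unfrozen and return validation accuracy, and the accuracy numbers are made up purely to show the control flow.

```python
def probe_then_unfreeze(evaluate, blocks, target_accuracy):
    """Unfreeze blocks from the top down until the target accuracy is met.

    `evaluate(trainable_blocks)` is a hypothetical callable that trains with
    those blocks unfrozen and returns validation accuracy.
    """
    for depth in range(1, len(blocks) + 1):
        trainable = blocks[-depth:]
        accuracy = evaluate(trainable)
        if accuracy >= target_accuracy:
            break  # good enough: stop before spending more compute
    return trainable, accuracy

# Made-up accuracies, keyed by how many blocks are unfrozen (illustration only).
fake_results = {1: 0.80, 2: 0.88, 3: 0.91, 4: 0.92}

def fake_evaluate(trainable_blocks):
    return fake_results[len(trainable_blocks)]

blocks = ["bottom", "middle", "top", "head"]
result = probe_then_unfreeze(fake_evaluate, blocks, target_accuracy=0.85)
print(result)  # (['top', 'head'], 0.88)
```

With a target of 0.85, the loop stops after unfreezing the top block, so the more expensive deeper stages never run.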

Best Practice Recipes (Vision Example)

Here’s how experts typically apply these rules:

Recipe A — Small Dataset (~10k images)

- Freeze all layers, train head only (Learning Rate = 1e-3).

- Unfreeze the last block (e.g., final ResNet or ViT stage), with a much smaller LR (1e-5).

- Stop when validation accuracy stops improving.

Recipe B — Medium Dataset (~50k images)

- Train head only (LR = 1e-4).

- Unfreeze 2–4 blocks, using discriminative learning rates:

- Head: 1e-4

- Top blocks: 1e-5

- Lower blocks: 1e-6

- Add data augmentations and regularization to avoid overfitting.

Recipe C — Large Dataset or Big Domain Shift

- Full fine-tuning with a very small LR (1e-6).

- Apply strong regularization (weight decay, dropout).

- If possible, pretrain on unlabeled data first (self-supervised learning).
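The recipes rely on a stopping rule ("stop when validation accuracy stops improving"). One common way to make that concrete is patience-based early stopping, sketched below. The `patience` value and the accuracy history are assumptions for illustration, not values from the recipes.

```python
def early_stopping_epoch(val_accuracies, patience=3):
    """Return the epoch index at which training would stop.

    Training stops once `patience` epochs have passed without a new best
    validation accuracy; otherwise it runs to the last epoch.
    """
    best, best_epoch = float("-inf"), -1
    for epoch, acc in enumerate(val_accuracies):
        if acc > best:
            best, best_epoch = acc, epoch
        elif epoch - best_epoch >= patience:
            return epoch
    return len(val_accuracies) - 1

# Made-up validation history, for illustration only.
history = [0.70, 0.75, 0.78, 0.77, 0.78, 0.76, 0.75]
print(early_stopping_epoch(history))  # 5
```

Here the best score first appears at epoch 2, and three epochs later (epoch 5) nothing has beaten it, so training stops.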

A Quick Note on Learning Rates (LR)

The learning rate is like the “pace of learning”:

- Large LR (e.g., 1e-3) → Learns fast but can overshoot good solutions and destabilize training, like skimming a book too quickly.

- Small LR (e.g., 1e-6) → Learns slowly and makes finer, more stable adjustments, but needs more training steps, like carefully studying each page.

That’s why experts often use different learning rates for different parts of the model — letting the new head learn fast, while the older pretrained layers relearn more cautiously.
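A toy example makes the trade-off visible. The sketch below runs plain gradient descent on f(w) = w², whose minimum is at w = 0. The function and the three learning rates are chosen only for illustration and are far larger than the rates used for real networks.

```python
def gradient_descent(lr, steps=20, w=1.0):
    """Minimize f(w) = w**2 (gradient 2*w), starting from w."""
    for _ in range(steps):
        w = w - lr * 2 * w
    return w

for lr in (1.1, 0.1, 0.001):
    distance = abs(gradient_descent(lr))
    print(f"lr={lr}: distance from optimum after 20 steps = {distance:.4f}")
```

With the largest rate, each step overshoots and the distance grows. With the moderate rate, the distance shrinks quickly. With the tiny rate, the model barely moves in 20 steps. That is the intuition behind giving a fresh head a higher rate and pretrained layers a lower one.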

Why this matters: Choosing what to unfreeze (and at what pace) is the art of transfer learning. Done wisely, it saves compute, boosts accuracy, and prevents your model from “forgetting” what it already knows.

1.8 Different Flavors of Transfer Learning

Transfer learning isn’t a single recipe — it’s more like an ice cream shop with many flavors. Which one you choose depends on how much data you have, how much computing power you can spend, and how close your task is to the original problem the model was trained on.

To make sense of it, let’s walk through ten common flavors, from the simplest to the most advanced:

1. Pretrained Feature Extraction (Fastest & Simplest)

- What it is: Freeze the pretrained model, use only its learned features (edges, shapes, textures).

- When to use: Small dataset + limited compute.

- Examples:

- Images → ResNet, EfficientNet, Vision Transformers (ViT).

- Text → BioBERT for medical articles.

- Analogy: Like borrowing someone’s notes instead of rewriting the whole textbook.

2. Fine-Tuning Pretrained Models (Most Common)

- Partial Fine-Tuning: Unfreeze only the last few layers to adapt to your dataset.

- Full Fine-Tuning: Retrain all layers with a small learning rate.

- When to use: Medium to large datasets, or when your data differs slightly from the source.

- Analogy: Editing a borrowed essay — sometimes you just tweak the conclusion, sometimes you rewrite the whole thing.

3. Head Swapping (Custom Top Layers)

- What it is: Replace the top classification layers with your own architecture (maybe an RNN, Transformer head, or custom classifier).

- When useful: When your labels don’t match the pretrained ones (e.g., cancer classes vs. ImageNet’s “cat/dog/bus”).

- Example: EfficientNet backbone + custom ViT block for medical image classification.

- Analogy: Keeping the same camera body but swapping in a new lens for different photos.

4. Domain-Adaptive Transfer Learning

- When: Source and target datasets are very different.

- Example: Model trained on ImageNet photos, adapted to skin lesion scans.

- How: Freeze lower layers, retrain middle/top layers, sometimes add adversarial tricks to reduce mismatch.

- Analogy: Learning to cook Italian food and then adapting to Japanese — the basics (boiling, frying) stay, but the recipes shift.

5. Progressive Layer Unfreezing (Discriminative Fine-Tuning)

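- What it is: Gradually unfreeze layers, starting with the head, then the top layers, then deeper ones. It is often paired with discriminative learning rates, where each layer group gets its own learning rate.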

- Why: Prevents catastrophic forgetting and gives stable improvements.

- Analogy: Like loosening the strings of a guitar one by one instead of all at once.

6. Knowledge Distillation (KD)

- What it is: A smaller “student” model is trained to reproduce the outputs of a large “teacher” model.

- Example: EfficientNetB7 → EfficientNetB0 for mobile health apps.

- Why: Smaller, faster models are easier to deploy but still accurate.

- Analogy: Like a master chef teaching an apprentice, who learns the same recipe but cooks it faster.
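A common way to implement distillation is to soften both models' outputs with a temperature and penalize the student for diverging from the teacher. The sketch below shows that soft-target loss in plain Python. The temperature value and the example logits are assumptions, and a full setup would typically combine this term with the usual loss on the true labels.

```python
import math

def softmax(logits, temperature=1.0):
    """Temperature-scaled softmax; higher temperature gives softer probabilities."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(student_logits, teacher_logits, temperature=2.0):
    """KL(teacher || student) on softened distributions, scaled by T**2."""
    p = softmax(teacher_logits, temperature)  # teacher's soft targets
    q = softmax(student_logits, temperature)  # student's predictions
    kl = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))
    return kl * temperature ** 2

teacher = [4.0, 1.0, 0.5]      # made-up teacher logits
good_student = [3.8, 1.1, 0.4]  # close to the teacher
poor_student = [0.5, 4.0, 1.0]  # disagrees with the teacher
print(distillation_loss(good_student, teacher))
print(distillation_loss(poor_student, teacher))
```

The loss is near zero when the student matches the teacher and grows as their predictions diverge.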

7. Multi-Task Transfer Learning

- What it is: One backbone → multiple related tasks.

- Example: Same EfficientNet model used for skin cancer detection:

- Task 1 → classify lesion type.

- Task 2 → predict benign vs malignant.

- Task 3 → segment lesion boundaries.

- Why it works: Tasks reinforce each other and improve generalization.

- Analogy: Learning both piano and guitar makes you a better musician overall.
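The shared-backbone idea can be sketched as one module with several heads. This is a simplified illustration: the backbone is a toy linear layer rather than EfficientNet, only the two classification tasks are shown (segmentation is left out), and the feature sizes, class count, and equal loss weighting are assumptions.

```python
import torch
from torch import nn

class MultiTaskNet(nn.Module):
    """One shared backbone feeding two task-specific heads."""

    def __init__(self, num_lesion_types: int = 7):
        super().__init__()
        # Toy backbone; stands in for a pretrained feature extractor.
        self.backbone = nn.Sequential(nn.Linear(64, 32), nn.ReLU())
        self.type_head = nn.Linear(32, num_lesion_types)  # Task 1
        self.malignancy_head = nn.Linear(32, 1)           # Task 2

    def forward(self, x):
        features = self.backbone(x)  # computed once, shared by both heads
        return self.type_head(features), self.malignancy_head(features)

model = MultiTaskNet()
x = torch.randn(8, 64)                           # fake feature batch
type_labels = torch.randint(0, 7, (8,))          # fake lesion-type labels
malignant = torch.randint(0, 2, (8, 1)).float()  # fake benign/malignant labels

type_logits, malignancy_logit = model(x)
loss = (
    nn.functional.cross_entropy(type_logits, type_labels)
    + nn.functional.binary_cross_entropy_with_logits(malignancy_logit, malignant)
)
loss.backward()  # gradients from both tasks flow into the shared backbone
```

Because both losses backpropagate through the same backbone, each task acts as extra training signal for the features the other task uses.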

8. Self-Supervised Pretraining + Fine-Tuning

- What it is: Train on unlabeled data first (using clever tricks like MAE, SimCLR), then fine-tune on your small labeled dataset.

- Example: Pretrain on millions of unlabeled medical images, then fine-tune for cancer detection.

- Why: Most real-world data is unlabeled — this makes use of it.

- Analogy: Learning a language by watching movies before taking grammar lessons.

9. Zero-Shot / Few-Shot Transfer (Foundation Models)

- What it is: Use giant pretrained models like CLIP, SAM, BioViL, or PaLI. Many of these (CLIP, BioViL, PaLI) already understand language + images together, while SAM is a promptable segmentation model.

- Zero-Shot: Model predicts without retraining.

- Few-Shot: Model adapts with just a handful of examples.

- Example: SAM (Segment Anything Model) can outline objects without task-specific retraining.

- Analogy: Like a genius polyglot who can pick up new languages almost instantly.
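For CLIP-style models, zero-shot classification usually boils down to comparing an image embedding against text embeddings of the candidate labels and picking the closest one. The sketch below shows only that comparison step. The three-dimensional vectors are made-up stand-ins, since real embeddings come from the model's image and text encoders and have hundreds of dimensions.

```python
import math

def cosine(a, b):
    """Cosine similarity between two vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def zero_shot_classify(image_embedding, label_embeddings):
    """Pick the label whose text embedding is most similar to the image."""
    return max(
        label_embeddings,
        key=lambda label: cosine(image_embedding, label_embeddings[label]),
    )

# Hypothetical embeddings, for illustration only.
label_embeddings = {
    "benign": [0.9, 0.1, 0.0],
    "malignant": [0.1, 0.9, 0.2],
}
image_embedding = [0.2, 0.8, 0.1]

print(zero_shot_classify(image_embedding, label_embeddings))  # malignant
```

No weights are updated anywhere: adding a new class only requires embedding a new label description.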

10. Cross-Modal Transfer Learning

- What it is: Transfer knowledge across data types (text ↔ images ↔ audio).

- Example: Use BioBERT (text embeddings from medical reports) + EfficientNet (image features from scans) together for diagnosis.

- Why: Sometimes combining “voices” from different data sources gives a clearer picture.

- Analogy: Reading subtitles while watching a foreign movie — two sources make understanding easier.

Bottom line: Transfer learning has many flavors — from the quick-and-easy “feature extraction” to cutting-edge “foundation models” that work across modalities. The trick is to pick the flavor that best matches your dataset, compute budget, and problem.

Transfer Learning Cheat Sheet (with Medical Imaging Examples)

Here’s a quick reference to help you see which transfer learning technique fits which scenario. Think of it as a menu for solving real-world problems, especially in medical AI:

| Technique | How It Works | Best Used When… | Medical Use Case Example | One-Line Takeaway |
| --- | --- | --- | --- | --- |
| Feature Extraction | Freeze the pretrained model, use it as a feature extractor. | Small dataset, low compute. | EfficientNetB0 → HAM10000 (skin lesion classification). | Quick & safe when data is limited. |
| Fine-Tuning | Unfreeze the top layers and retrain partially. | Medium dataset, slight domain shift. | ResNet on chest X-rays. | A balanced middle ground. |
| Full Fine-Tuning | Unfreeze all layers, retrain everything. | Large dataset, major domain shift. | Full ViT on diabetic retinopathy scans. | Maximum flexibility, but costly. |
| Domain-Adaptive TL | Adapt model trained on related domain. | Dataset ≠ ImageNet (completely different). | BioViT → HAM10000. | Teaches the model to “speak a new language.” |
| Adapter-Based TL | Keep backbone frozen, add small “adapter” modules. | Low compute budget. | LoRA with Vision Transformer. | Efficient when hardware is limited. |
| Prompt-Based TL | Use prompts instead of retraining. | Using large foundation models. | BioGPT for diagnostics. | Ask, don’t train. |
| Self-Supervised TL | Pretrain on unlabeled data → fine-tune on few labels. | Few labeled samples, lots of unlabeled. | SimCLR or DINO on radiographs. | Learn first, label later. |
| Zero/Few-Shot TL | Model works with little to no new training. | Extremely small datasets. | GPT-4V on unseen medical tasks. | Great when labels are scarce. |
| Cross-Modal TL | Combine features across data types (text, image, audio). | Multi-source input. | MedCLIP (X-rays + reports). | Two sources are better than one. |
| Multi-Task TL | One model learns multiple related tasks. | Data is scarce, multitask improves learning. | Lesion classification + segmentation. | Sharing tasks makes models smarter. |

1.9 Common Pitfalls and How to Troubleshoot

Transfer learning saves time and data, but it isn’t always smooth sailing. Just like any good recipe, things can go wrong if you don’t watch the details. Here are some common problems people run into — and how to fix them.

| Symptom | What’s Probably Happening | How to Fix It |
| --- | --- | --- |
| Training loss keeps dropping, but validation accuracy doesn’t improve. | The model is overfitting: memorizing the training set but not generalizing. | Add regularization, use dropout, or freeze more layers. |
| Accuracy suddenly drops after you unfreeze layers. | Learning rate is too high for the pretrained layers. | Lower the LR for those layers; keep head LR higher than backbone LR. |
| Model acts unstable with small batch sizes. | Batch Normalization statistics are noisy when estimated from very small batches. | Freeze BN layers or switch to GroupNorm/LayerNorm. |
| Model “forgets” useful features it had before. | Catastrophic forgetting: pretrained knowledge is being overwritten. | Try progressive unfreezing or lower LR. |
| Training only the head doesn’t improve performance. | Too much domain shift between source and target data. | Unfreeze more layers so the model can adapt. |
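The "freeze BN layers" fix from the table can be sketched as a small PyTorch helper. It puts every BatchNorm layer into eval mode (so running statistics stop updating) and freezes its scale and shift parameters. The toy model is an assumption for illustration.

```python
from torch import nn

BATCHNORM_TYPES = (nn.BatchNorm1d, nn.BatchNorm2d, nn.BatchNorm3d)

def freeze_batchnorm(model: nn.Module) -> None:
    """Stop BatchNorm layers from updating statistics or parameters."""
    for module in model.modules():
        if isinstance(module, BATCHNORM_TYPES):
            module.eval()  # use stored running mean/var, do not update them
            for p in module.parameters():
                p.requires_grad = False  # freeze scale (weight) and shift (bias)

# Toy model with one BatchNorm layer, for illustration only.
model = nn.Sequential(nn.Conv2d(3, 8, kernel_size=3), nn.BatchNorm2d(8), nn.ReLU())

model.train()            # model.train() switches BN back to training mode...
freeze_batchnorm(model)  # ...so the helper is called after it

print(model[1].training)  # False
```

One practical catch: any later call to `model.train()` re-enables BatchNorm updates, so the helper has to be re-applied at the start of each training epoch.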

1.10 Advanced Transfer Learning Techniques

If you’ve mastered the basics, there are newer and smarter tricks that can make transfer learning even more powerful. These techniques are especially useful when working with limited compute, scarce labeled data, or highly specialized tasks (like medical imaging).

Adapters (e.g., LoRA)

- What it is: Keep the big pretrained model frozen, but add tiny trainable modules to it. Classic adapters sit between layers, while LoRA adds small low-rank matrices alongside existing weight matrices.

- Why it matters: Instead of retraining the whole giant model, you only train the small “adapters.”

- Benefit: Super fast, memory-efficient, and perfect when resources are tight.

- Analogy: Like adding plug-in attachments to a machine instead of rebuilding the whole engine.
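To make the idea concrete, here is a minimal LoRA-style wrapper around a single linear layer. It is a simplified sketch rather than the API of any particular library: the class name `LoRALinear`, the rank, the `alpha` scaling, and the initialization constants are all assumptions chosen for illustration.

```python
import torch
from torch import nn

class LoRALinear(nn.Module):
    """Frozen linear layer plus a trainable low-rank update."""

    def __init__(self, base: nn.Linear, rank: int = 4, alpha: float = 8.0):
        super().__init__()
        self.base = base
        for p in self.base.parameters():
            p.requires_grad = False  # the pretrained weights stay frozen
        # Low-rank factors: A starts small and random, B starts at zero, so
        # the wrapped layer initially behaves exactly like the original one.
        self.lora_a = nn.Parameter(torch.randn(rank, base.in_features) * 0.01)
        self.lora_b = nn.Parameter(torch.zeros(base.out_features, rank))
        self.scale = alpha / rank

    def forward(self, x):
        update = x @ self.lora_a.T @ self.lora_b.T  # low-rank path
        return self.base(x) + self.scale * update

layer = LoRALinear(nn.Linear(512, 512), rank=4)
trainable = sum(p.numel() for p in layer.parameters() if p.requires_grad)
total = sum(p.numel() for p in layer.parameters())
print(trainable, total)  # 4096 266752
```

In this example only 4,096 of 266,752 parameters are trainable, roughly 1.5%, which is where the memory and speed savings come from.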

Discriminative Learning Rates

- What it is: Use different learning rates for different parts of the model.

- Head → higher LR (learns faster).

- Backbone → lower LR (learns slower, keeps old knowledge).

- Why it matters: Prevents the model from “forgetting” what it already knows while still adapting to the new task.

- Analogy: Teaching a class where beginners get fast-paced lessons, while experts review at a slower pace.

Domain-Adaptive Pretraining

- What it is: Before fine-tuning, do self-supervised pretraining on large amounts of unlabeled data from your domain.

- Why it matters: In fields like medical imaging, where labeled data is scarce, this gives the model a strong head start.

- Example: Pretraining on thousands of unlabeled X-rays, then fine-tuning on a smaller labeled set for disease detection.

Layer-Wise LR Scheduling

- What it is: Combine a learning rate schedule (like cosine decay) with different rates for each layer group.

- Why it matters: Gives finer control, making training smoother and less likely to “blow up.”

- Analogy: Like adjusting the volume of each instrument in an orchestra at different times — ensures harmony during training.
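One way to picture layer-wise scheduling is to apply the same cosine decay curve to a different base learning rate per layer group. The sketch below computes the rates by hand. The group names, base rates, and step count are illustrative assumptions.

```python
import math

def cosine_lr(base_lr, step, total_steps, min_lr=0.0):
    """Cosine decay from base_lr (step 0) down to min_lr (final step)."""
    progress = step / total_steps
    return min_lr + 0.5 * (base_lr - min_lr) * (1 + math.cos(math.pi * progress))

# Hypothetical layer groups, each with its own base learning rate.
base_lrs = {"head": 1e-3, "top": 1e-4, "bottom": 1e-5}
total_steps = 100

for step in (0, 50, 100):
    rates = {name: cosine_lr(lr, step, total_steps) for name, lr in base_lrs.items()}
    print(step, rates)
```

Every group follows the same curve (full rate at the start, half at the midpoint, zero at the end), but the head always stays 10× ahead of the top layers and 100× ahead of the bottom ones.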

1.11 Final summary / quick decision guide

After all the theory, here’s a simple guide you can use to decide which transfer learning strategy to try:

| Data Size | Domain Shift | Compute Budget | Best Strategy |
| --- | --- | --- | --- |
| Small | Low | Low | Train only the head (fast, efficient). |
| Small | High | Medium | Progressive unfreezing + small learning rate. |
| Medium | Any | Medium | Partial fine-tuning (2–4 blocks). |
| Large | Any | High | Full fine-tuning. |
| Any | Any | Low | Adapter-based methods (LoRA). |
| Any – Unlabeled data only | Any | Medium | Self-supervised pretraining → fine-tune. |
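The decision guide can also be written as a small lookup function. This is one possible reading of the table, not a definitive rule: the table does not say which row wins when several match, so the precedence below (unlabeled data first, then low compute, then data size) is an assumption.

```python
def choose_strategy(data_size, domain_shift, compute, labeled=True):
    """Suggest a transfer learning strategy.

    data_size: "small" | "medium" | "large"
    domain_shift: "low" | "high"
    compute: "low" | "medium" | "high"
    """
    if not labeled:
        return "self-supervised pretraining, then fine-tune"
    if data_size == "small" and domain_shift == "low":
        return "train only the head"
    if compute == "low":
        return "adapter-based methods (LoRA)"
    if data_size == "small":
        return "progressive unfreezing with a small learning rate"
    if data_size == "medium":
        return "partial fine-tuning (2-4 blocks)"
    return "full fine-tuning"

print(choose_strategy("small", "low", "low"))      # train only the head
print(choose_strategy("medium", "high", "medium"))  # partial fine-tuning (2-4 blocks)
print(choose_strategy("large", "high", "low"))      # adapter-based methods (LoRA)
```

Treat the output as a starting point to probe, not a verdict: the earlier advice about training a linear probe first still applies.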

Transfer learning is, at its heart, the art of reusing what’s already been learned — saving time, compute, and data while often achieving better results. From simple feature extraction to advanced techniques like adapters, self-supervised learning, or foundation models, the goal remains the same: build smarter systems with less effort. The cheat sheet above gives you a practical compass — whether you’re working with small datasets, medical imaging, or large-scale domain shifts.

But transfer learning isn’t just for images. In fact, one of its most powerful playgrounds has been language. Just as models trained on millions of pictures can be adapted to detect diseases, models trained on billions of words can be adapted to summarize, translate, or even diagnose through medical text. In the next chapter — Transfer Learning for NLP — we’ll dive into how techniques like word embeddings, transformers, and fine-tuned language models are reshaping the way machines understand human language.
