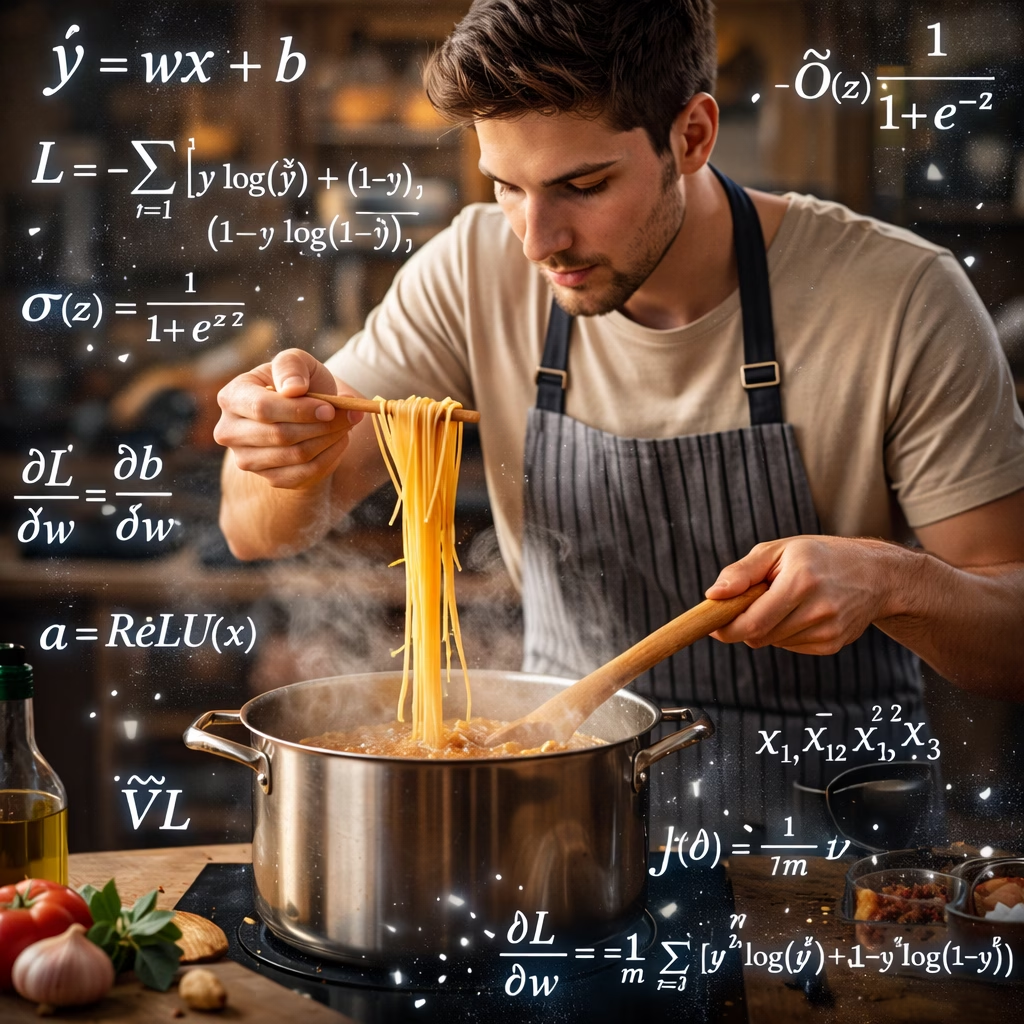

Deep learning concepts can feel overwhelming to beginners at first, since they are often explained through complex equations and technical jargon. But at its core, deep learning is about learning through practice and feedback. In this article, we explore key deep learning concepts using a familiar analogy, someone learning how to cook pasta, to make neural networks (the backbone of deep learning) easier to understand without requiring a technical background.

The first time someone cooks pasta, it’s rarely perfect.

Too salty. Too bland. Overcooked. Undercooked.

But something important happens with every attempt: learning.

That’s exactly how a neural network learns.

A neural network isn’t magical. It doesn’t “think.”

It follows a process that looks surprisingly similar to a person learning how to cook—by preparing ingredients, following a recipe, tasting the result, and adjusting step by step.

Let’s walk through the core ideas of deep learning using a familiar kitchen analogy.

1. Data Preparation: Preparing Ingredients Before Cooking

Before cooking begins, the kitchen must be ready. Ingredients can’t be scattered randomly around the counter.

In deep learning, this preparation phase is just as important as the learning itself.

Vectorization: Organizing Ingredients Properly

Imagine handing someone ingredients like this:

- flour somewhere on the table

- spices scattered across jars

- water in random containers

Cooking would be chaotic.

Instead, we prepare ingredients in a structured way:

- tomatoes → chopped

- onions → sliced

- spices → measured

- pasta → weighed

This is vectorization.

In neural networks, vectorization means converting messy, real-world information into a clean, structured numerical format that the model can actually work with.

Beginner intuition:

A model can’t learn from chaos. Structure comes first.
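As a rough sketch of the idea, the snippet below turns a recipe written as loose key-value notes into a fixed-order list of numbers. The ingredient names, the `FEATURES` order, and the `vectorize` helper are made up for illustration and do not come from any library.

```python
# Hypothetical feature order: every recipe is described by the same slots.
FEATURES = ["pasta_g", "salt_tsp", "herbs_tsp", "boil_min"]

def vectorize(recipe):
    """Turn a recipe dict into a list of floats in a fixed order.

    Missing ingredients become 0.0 (an assumption made for this sketch).
    """
    return [float(recipe.get(name, 0.0)) for name in FEATURES]

# The notes arrive in no particular order and one ingredient is missing.
recipe = {"salt_tsp": 1, "pasta_g": 200, "boil_min": 9}
vector = vectorize(recipe)
print(vector)  # [200.0, 1.0, 0.0, 9.0]
```

Whatever order the notes arrive in, the model always sees pasta first, salt second, and so on.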

Normalization: Keeping Proportions Reasonable

Now imagine one ingredient is 5 kilograms while another is 2 grams. Even an experienced cook would struggle to balance flavors.

So we scale ingredients sensibly:

- salt → 1 teaspoon, not a cup

- pasta → 200 grams, not 5 kilograms

This is normalization.

Normalization keeps values within a comparable range so no single input overwhelms the rest. Balanced ingredients lead to stable learning—just like balanced data leads to stable training.
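One common way to do this is min-max scaling, which squeezes every value into the range 0 to 1. The sketch below assumes that method (standardization is another popular choice), and the pasta amounts are invented for the example.

```python
def min_max_scale(values):
    """Rescale a list of numbers to the range [0, 1]."""
    lo, hi = min(values), max(values)
    if hi == lo:  # all values identical: avoid dividing by zero
        return [0.0 for _ in values]
    return [(v - lo) / (hi - lo) for v in values]

# Hypothetical pasta amounts (in grams) across four recipes
pasta_grams = [100, 200, 300, 500]
scaled = min_max_scale(pasta_grams)
print(scaled)  # [0.0, 0.25, 0.5, 1.0]
```

After scaling, grams of pasta and teaspoons of salt live on the same 0 to 1 scale, so neither dominates just because of its units.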

2. Parameters: Weights and Bias Are Cooking Decisions

Once ingredients are prepared, the cook starts making choices. In neural networks, these adjustable choices are called parameters.

Weights: How Much Each Ingredient Matters

Weights decide importance.

- more salt → saltier dish

- more cheese → creamier texture

- more chili → spicier flavor

Over time, the cook might learn:

“This pasta tastes better with more herbs and less salt.”

So the “weight” of herbs increases, and salt decreases.

In neural networks, weights control how strongly each input influences the final outcome.

Bias: The Chef’s Personal Touch

Even with perfect ingredient proportions, a dish sometimes needs a final tweak.

That last pinch of salt?

That’s bias.

Bias is a constant added to the weighted sum of the inputs, shifting the result up or down regardless of the ingredients. It’s the cook’s natural tendency or default style.

- Weights decide what matters most

- Bias fine-tunes the final result
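In code, a single artificial neuron combines the two ideas in one line: multiply each input by its weight, add everything up, then add the bias. The numbers below are invented for illustration, and `weighted_sum` is a hypothetical helper name.

```python
def weighted_sum(inputs, weights, bias):
    """Multiply each input by its weight, add them up, then add the bias."""
    return sum(x * w for x, w in zip(inputs, weights)) + bias

# Hypothetical normalized amounts of salt, cheese, and chili
inputs = [0.5, 1.0, 0.0]
weights = [-2.0, 1.0, 0.5]  # this cook dislikes salt and likes cheese
bias = 0.25                 # baseline shift applied whatever the inputs are

score = weighted_sum(inputs, weights, bias)
print(score)  # 0.25
```

Here the salt penalty (-1.0) and the cheese bonus (+1.0) cancel out, so the final score is just the bias.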

3. Activation Functions: Taste Checks That Guide Decisions

A good cook doesn’t blindly follow steps. They taste along the way.

After mixing the sauce, the cook asks:

- “Does this taste right?”

- If yes → continue

- If no → adjust

That’s exactly what activation functions do.

They act as decision filters inside the network, allowing it to treat situations differently instead of responding linearly to everything.

Common intuition-friendly examples:

- ReLU: If flavor is positive, keep it; otherwise ignore it

- Sigmoid: How acceptable is this dish, on a scale from 0 (no) to 1 (yes)?

- Tanh: How good does this taste, on a scale from -1 (very bad) to +1 (very good)?

Without activation functions, a network could only learn straight-line (linear) relationships no matter how many layers it had. That would be like cooking without tasting.
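For readers who want to see the three functions named above, here is a minimal sketch using only Python's standard `math` module. The sample inputs are arbitrary.

```python
import math

def relu(x):
    """Keep positive values, replace negative ones with 0."""
    return max(0.0, x)

def sigmoid(x):
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-x))

def tanh(x):
    """Squash any number into the range (-1, 1)."""
    return math.tanh(x)

# Compare how each function treats a bad, neutral, and good "flavor score".
for x in (-2.0, 0.0, 2.0):
    print(x, relu(x), round(sigmoid(x), 3), round(tanh(x), 3))
```

Note that sigmoid does not return a hard yes or no. It returns a value between 0 and 1 that is often read as a probability.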

4. Forward Propagation: Cooking the Dish

Now the cook follows the recipe:

- boil the pasta

- sauté onions

- add sauce and seasoning

- mix and serve

They use their current knowledge and technique to produce a dish.

In deep learning, this step is called forward propagation.

It simply means:

Using current parameters to produce an output.

Forward propagation = cooking with what you currently know.
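A tiny forward pass might look like the sketch below: two inputs flow through a hidden layer of two neurons and then into one output neuron. The layer sizes, the parameter values, and the helper names `neuron` and `forward` are all assumptions chosen to keep the example small.

```python
import math

def relu(x):
    return max(0.0, x)

def sigmoid(x):
    return 1.0 / (1.0 + math.exp(-x))

def neuron(inputs, weights, bias, activation):
    """Weighted sum plus bias, passed through an activation function."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return activation(z)

def forward(inputs, params):
    """Run the inputs through the hidden layer, then the output neuron."""
    hidden = [neuron(inputs, w, b, relu) for w, b in params["hidden"]]
    w_out, b_out = params["output"]
    return neuron(hidden, w_out, b_out, sigmoid)

# Made-up current parameters: (weights, bias) for each neuron
params = {
    "hidden": [([1.0, -1.0], 0.0), ([0.5, 0.5], -0.5)],
    "output": ([1.0, 1.0], -0.5),
}

prediction = forward([1.0, 0.5], params)
print(round(prediction, 3))  # 0.562
```

Nothing is learned in this step. The network simply produces its best guess with the parameters it has right now.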

5. Loss Function: How Far Is This from Delicious?

Now comes the moment of truth—the taste test.

- tastes awful → high loss

- almost perfect → low loss

- perfect dish → minimal loss

A loss function converts taste into a number.

It answers one key question:

“How far is this result from what we want?”

Loss is not criticism.

It’s feedback.
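One widely used loss function is mean squared error, which averages the squared gap between each prediction and its target. The sketch below assumes that choice, with invented scores where 1.0 means "delicious".

```python
def mean_squared_error(predictions, targets):
    """Average of the squared differences between predictions and targets."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)

targets = [1.0, 1.0, 1.0, 1.0]  # what we want: four delicious dishes

awful = mean_squared_error([0.0, 0.0, 0.0, 0.0], targets)
close = mean_squared_error([0.5, 1.0, 1.0, 1.0], targets)
perfect = mean_squared_error([1.0, 1.0, 1.0, 1.0], targets)

print(awful, close, perfect)  # 1.0 0.0625 0.0
```

The further the results are from the targets, the larger the number.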

6. Backpropagation: Learning What Went Wrong

If the pasta is too salty, the solution is obvious: reduce the salt next time.

If it’s bland, increase the spices.

If it’s overcooked, reduce the boiling time.

The cook reflects on what caused the problem and adjusts the recipe.

This is backpropagation.

Backpropagation means tracing the error backward through the network to work out how much each parameter contributed to it. Those contributions, called gradients, are then used to update the parameters.
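For the simplest possible case, one neuron with one input, no activation function, and a squared-error loss, the backward trace can be written out by hand with the chain rule. This is a sketch under those assumptions, not how a full framework implements it.

```python
def forward(x, w, b):
    """Prediction of a single linear neuron."""
    return w * x + b

def loss(y, target):
    """Squared error between prediction and target."""
    return (y - target) ** 2

def backward(x, w, b, target):
    """Apply the chain rule from the loss back to each parameter."""
    y = forward(x, w, b)
    dloss_dy = 2.0 * (y - target)  # how the loss changes with the output
    dloss_dw = dloss_dy * x        # because dy/dw = x
    dloss_db = dloss_dy * 1.0      # because dy/db = 1
    return dloss_dw, dloss_db

grad_w, grad_b = backward(x=2.0, w=1.0, b=0.5, target=1.5)
print(grad_w, grad_b)  # 4.0 2.0
```

Both gradients are positive, which says that lowering `w` and `b` would reduce the loss, and that `w` is the bigger culprit.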

7. Gradient Descent: Improving One Dish at a Time

Learning doesn’t happen in one attempt.

The cook repeats the process:

- cook

- taste

- adjust slightly

- try again

- improve

Not drastic changes. Just small, steady refinements.

That’s gradient descent.

Gradient descent nudges each parameter a small step in the direction that reduces the loss, and repeats until the dish consistently tastes right.
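Here is a minimal sketch of that loop with a single adjustable value. It assumes a made-up loss whose best salt amount is 3.0 grams, and a learning rate of 0.1 picked for illustration.

```python
def loss(salt):
    """Hypothetical taste penalty: lowest when salt is 3.0 grams."""
    return (salt - 3.0) ** 2

def gradient(salt):
    """Slope of the loss at the current salt amount."""
    return 2.0 * (salt - 3.0)

salt = 0.0           # first attempt: no salt at all
learning_rate = 0.1  # size of each adjustment (an assumed value)

for attempt in range(50):
    # Step against the slope: a positive slope means "use less salt".
    salt -= learning_rate * gradient(salt)

print(round(salt, 3))  # 3.0
```

Each pass closes a fraction of the remaining gap, so the early steps are large and the later ones become tiny refinements.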

8. Parameters vs Hyperparameters: What Learns vs What You Decide

This distinction matters more than beginners realize.

Parameters: Learned Through Practice

These evolve automatically with experience:

- how much salt tastes good

- ideal boiling time

- sauce-to-pasta ratio

In neural networks, these are the weights and biases.

Hyperparameters: Set Before Cooking

These guide the learning process but aren’t adjusted automatically:

- number of attempts

- cooking temperature

- portion size

- choice of olive oil vs butter

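You decide them upfront. The cook doesn’t change them mid-learning. In neural networks, typical hyperparameters include the learning rate, the number of training passes (epochs), the batch size, and the number of layers.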

In short:

Parameters are learned.

Hyperparameters are chosen.
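The difference shows up clearly in a small training loop. In this sketch, `LEARNING_RATE` and `EPOCHS` are hyperparameters fixed by hand, while `w` and `b` are parameters the loop learns from invented data that follows y = 2x + 1.

```python
# Hyperparameters: chosen by us before training starts.
LEARNING_RATE = 0.05
EPOCHS = 2000

# Hypothetical training data that follows y = 2x + 1
data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]

# Parameters: start at arbitrary values and are learned from the data.
w, b = 0.0, 0.0

for _ in range(EPOCHS):
    grad_w = grad_b = 0.0
    for x, target in data:
        error = (w * x + b) - target
        # Gradients of the mean squared error with respect to w and b
        grad_w += 2.0 * error * x / len(data)
        grad_b += 2.0 * error / len(data)
    w -= LEARNING_RATE * grad_w
    b -= LEARNING_RATE * grad_b

print(round(w, 2), round(b, 2))  # approximately 2.0 1.0
```

The loop never touches `LEARNING_RATE` or `EPOCHS`. If training goes badly, changing them is a decision for the person running it.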

Putting It All Together

| Neural Network Concept | Cooking Analogy |
|---|---|
| Vectorization | Ingredients chopped, measured, organized |
| Normalization | Ingredient proportions balanced |
| Weights | Importance of each ingredient |
| Bias | Chef’s final seasoning tweak |
| Activation Function | Taste checks during cooking |
| Forward Propagation | Cooking with current recipe |
| Loss Function | Taste rating of the dish |
| Backpropagation | Adjusting recipe based on mistakes |
| Gradient Descent | Practicing and improving over time |


Discover more from Debabrata Pruseth
