A Beginner-Friendly Guide to Privacy in AI

- 1. Why Do We Need Privacy in AI?

- 2. What Does “Privacy in AI” Actually Mean?

- 3. A Three-Layer Approach to Building Privacy Into AI

- 4. Predicting Bank Loan Approval With Differential Privacy

- 5. Exploring Parameter Sweeps in Differential Privacy

- 6. Introducing Federated Learning: The Final Privacy Layer

- 6. Conclusion: Building AI We Can Trust

When I speak to students or young professionals stepping into AI, I often tell them this: a model can be fast, fair and beautifully explainable — but if it isn’t private, it won’t be trusted.

In today’s world, privacy is not a “nice to have.” It is the foundation on which responsible AI is built. If customers fear their data might leak, be misused, or be revealed by accident, they will walk away, no matter how impressive your model may be.

In this guide, I want to give you a clear and beginner-friendly introduction to how we build privacy into AI, and why it matters. We will slowly move toward a hands-on, real-world project so you can see how all of this works in practice.

1. Why Do We Need Privacy in AI?

When I first learned about AI systems that touched personal information — bank records, health data, or even browsing history — I realised how fragile trust can be.

One mistake, one leak, or one careless design choice can cost an organisation not just money, but credibility.

Two forces make privacy essential:

Regulations : Laws such as GDPR, Singapore’s PDPA, and CCPA require companies to protect personal data. Failing to do so can lead to large penalties and forced shutdowns of AI systems.

Customer Trust : Even without regulation, people hesitate to interact with a model if they feel it might expose their details.

A clever hacker can even use an unprotected model to reverse-engineer someone’s identity — a technique called model inversion — and use it for fraud or social spoofing.

So privacy is not only a legal requirement; it is a promise we make to everyone whose data we use.

2. What Does “Privacy in AI” Actually Mean?

When I say a model is “private,” I mean that no one should be able to figure out personal details by interacting with it.

Even if someone knows almost everything about the training data except one person, they should still not be able to identify that individual.

This guarantee is measured using a value called privacy loss, written as ε (epsilon).

Here is the simplest way I explain it:

- Small epsilon → strong privacy

- Large epsilon → weaker privacy but better accuracy

To keep data safe, we add carefully calibrated noise during training. This protects individuals but can slightly reduce model accuracy.

The goal is to find the sweet spot where the model stays useful and private — something we will explore together in our practical project.

3. A Three-Layer Approach to Building Privacy Into AI

Most organisations today use a three-layer privacy architecture.

Think of it as three safety shields, each protecting the model at a different stage. I use this structure in my own projects and teach it to students because it is simple, scalable, and effective.

Layer 1: Operational Privacy – Keep the Model and Data In-House

The first layer is about where your data lives.

Instead of sending sensitive information across the internet, everything stays inside the organisation’s secure environment. This reduces exposure and limits who can access what.

Layer 2: Model-Level Privacy – Build Privacy Into the Training Process

This is where differential privacy comes in. We inject privacy guarantees during training using tools such as:

In the upcoming example, I’ll use diffpriv library to show you exactly how differential privacy is added to a model.

Layer 3: Federated Privacy – Let the Data Stay Where It Belongs

In this final layer, each department or branch keeps its own data and trains its own small model.

A central model only collects their weight updates, not the raw data.

This is known as Federated Learning, and it ensures that sensitive information never leaves local environments.

Together, these three layers create a strong, practical framework for building trustworthy AI systems.

In the next section, we will build a real model step-by-step: Predicting Bank Loan Approval With Differential Privacy. We’ll use real-world techniques, apply noise to protect data, and measure the privacy–accuracy trade-off. This will give you a hands-on understanding of how privacy works in everyday AI systems.

4. Predicting Bank Loan Approval With Differential Privacy

When people ask me how privacy changes the behaviour of an AI model, I usually give them this example. It’s simple, practical, and shows the full privacy–utility trade-off in action.

Here, our goal is to train two models that predict whether a bank will approve a loan. We use a public finance dataset with features like income, employment history, credit behaviour and more.

- Model 1: A standard, non-private logistic regression (our baseline).

- Model 2: A differentially private model using IBM’s diffprivlib.

Once trained, we compare their accuracy, precision, recall and F1-score across different levels of ε (epsilon) — the privacy budget.

I’ve shared the full code and outputs in my GitHub (including datasource used for training), but let me walk you through the important learnings.

GitHub repo : https://github.com/debabratapruseth/privacy-in-ai-loan-model

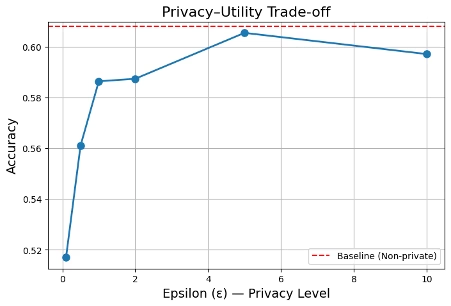

Watching Privacy and Accuracy Move Together

Imagine a bar graph with epsilon on the x-axis and model accuracy on the y-axis.

On the left, epsilon is tiny — meaning strong privacy. On the right, it’s large — meaning weaker privacy.

Here’s what the model showed ( Refer the code run uploaded in GitHub link shared earlier):

- Strong Privacy (ε = 0.1, 0.5): Lots of noise → low accuracy

- Moderate Privacy (ε = 1.0, 2.0): Balanced noise → good accuracy

- Weak Privacy (ε = 5.0, 10.0): Minimal noise → accuracy close to baseline

This is the classic privacy trade-off:

more noise = more privacy, but less predictive power.

The Results at a Glance

| ε (epsilon) | Accuracy | Precision | Recall | F1 |

| Baseline | 0.608 | 0.609 | 0.985 | 0.753 |

| 0.1 | 0.524 | 0.610 | 0.601 | 0.605 |

| 0.5 | 0.578 | 0.607 | 0.866 | 0.714 |

| 1.0 | 0.587 | 0.605 | 0.922 | 0.730 |

| 2.0 | 0.594 | 0.607 | 0.944 | 0.739 |

| 5.0 | 0.604 | 0.608 | 0.980 | 0.751 |

| 10.0 | 0.601 | 0.610 | 0.952 | 0.743 |

The baseline is the non-private model. Each row that follows shows how privacy changes the model’s behaviour. Now let’s unpack the main insights.

1. Very Strong Privacy (ε = 0.1) Hurts Performance

At this extreme setting:

- Accuracy drops to 0.524

- Recall falls sharply

- F1 — the balance of precision and recall — takes the biggest hit

Why this happens:

The privacy noise overwhelms the learning.

The model becomes cautious and unstable, missing many actual approvals.

2. Medium Privacy (ε = 0.5 to 1.0) Shows Big Improvement

Here, the noise is still strong but manageable.

- Accuracy rises to 0.578 → 0.587

- Recall climbs from 0.86 → 0.92

- F1 moves closer to baseline

My interpretation:

The model is finally “hearing” the patterns inside the dataset again.

This is typically the reliable zone in many applications.

3. Moderate Privacy (ε = 2.0) Hits the Best Balance

This is where everything aligns:

- Accuracy: 0.595 (very close to 0.608 baseline)

- Precision: stable

- Recall: 0.944 (excellent)

- F1: almost identical to the baseline

At ε = 2.0, the model keeps useful predictive power while still offering a meaningful privacy guarantee.

4. Weak Privacy (ε = 5 and 10) Becomes “Privacy-Light”

At this point, the model behaves almost exactly like the baseline.

- Accuracy ≈ 0.60

- F1 ≈ 0.74–0.75

The privacy protection becomes very thin. Good for accuracy, weak for safety.

The Sweet Spot: ε ≈ 2.0

To me, ε ≈ 2.0 is ideal for this dataset. It’s the point where:

- Accuracy stays high

- Recall stabilises

- F1 remains strong

- And the privacy guarantee still has real value

At very low epsilons, the model suffers.

At very high epsilons, privacy becomes symbolic.

At ε = 2.0, we land in the middle — a safe and effective privacy budget.

5. Exploring Parameter Sweeps in Differential Privacy

Now that we’ve seen how differential privacy affects a model, I want to take you one level deeper.

In real AI work, privacy isn’t controlled by epsilon alone. The behaviour of a differentially private model is shaped by a combination of hyperparameters — and the best way to understand their interaction is through a parameter sweep.

A parameter sweep simply means running the same model many times while changing key settings. This helps us see which combinations of settings give the best accuracy while still protecting privacy.

For this experiment, I swept across three important knobs:

- ε (epsilon): The privacy-strength parameter

- data_norm: The maximum L2 norm used to clip feature vectors

- C: The regularization term that controls model complexity

By combining these three, we can observe how privacy, stability and utility move together.

A Quick Primer: What These Hyperparameters Mean

Before we dive into the results, let me break down the two hyperparameters that students often find confusing.

data_norm — How much we clip the data

Differential privacy requires control over individual influence.

So before training, each data point is clipped to a maximum allowed “size,” defined by data_norm.

- A low data_norm means aggressive clipping → the model loses information

- A high data_norm means weak clipping → privacy weakens

Getting this balance right is essential.

C — The regularization strength

If you’ve used logistic regression before, you know C is the inverse of regularization.

- Low C → stronger regularization → simpler, more stable model

- High C → weak regularization → can overfit, especially under DP noise

Under differential privacy, a stable model generally behaves better. That’s why C becomes a critical part of the privacy equation.

Running 60 Experiments — and the Top 10 Results

I ran 60 combinations of epsilon, data_norm and C ( Refer the code run uploaded in GitHub link shared earlier).

Here are the top-performing 10 results ranked by accuracy and F1-score:

| idx | epsilon | data_norm | C | accuracy | f1 |

| 47 | 5.0 | 10.0 | 10.0 | 0.607320 | 0.753681 |

| 40 | 5.0 | 3.0 | 1.0 | 0.605729 | 0.741050 |

| 45 | 5.0 | 10.0 | 0.1 | 0.605530 | 0.751410 |

| 57 | 10.0 | 10.0 | 0.1 | 0.605132 | 0.750283 |

| 58 | 10.0 | 10.0 | 1.0 | 0.605132 | 0.750722 |

| 46 | 5.0 | 10.0 | 1.0 | 0.604734 | 0.751345 |

| 32 | 2.0 | 5.0 | 10.0 | 0.603939 | 0.749528 |

| 44 | 5.0 | 5.0 | 10.0 | 0.603740 | 0.745073 |

| 55 | 10.0 | 5.0 | 1.0 | 0.603541 | 0.746470 |

| 52 | 10.0 | 3.0 | 1.0 | 0.603143 | 0.738498 |

These results reinforce something I see often in privacy engineering: good privacy settings are never decided by epsilon alone.

They emerge from how epsilon interacts with clipping and regularization.

How to Interpret the Trends

1. The Role of Epsilon

- Low ε → strong privacy → high noise → lower accuracy

- High ε → weaker privacy → accuracy improves

- Beyond ε ≈ 5–10, accuracy stops improving — we hit diminishing returns

This mirrors what we saw earlier in the loan-approval example. Privacy has a curve, not a straight line.

2. The Role of data_norm

data_norm determines how much information each data point is allowed to contribute.

- Too low (e.g., 1.0) → excessive clipping → the model loses structure

- Too high (e.g., 15+ ) → privacy weakens

- Sweet spot: 3–5 — enough signal without violating privacy

This is consistent with standard DP theory.

3. The Role of C

Regularization plays a quiet but crucial role in stabilising DP models.

- Lower C (0.1 or 1.0)

- stronger regularization

- model becomes more robust against DP noise

- Higher C (10.0)

- Differential Privacy noise amplifies instability

- Performance may drop unless epsilon is high

In many differentially private systems, I prefer starting with C = 1.0 and only increasing if accuracy remains too low.

What We Learned From the Sweep

Across all 60 runs, the same theme appeared:

A moderate epsilon (2–5), moderate data_norm (3–5), and a stable C (0.1–1.0) consistently give the best privacy–utility trade-off.

This confirms a broader lesson I share with new AI engineers:

Differential privacy is not one setting — it’s a configuration space.

To find the right privacy level, you must tune the whole ecosystem, not just epsilon.

This parameter sweep helps us understand how these ingredients work together when we build real-world, private AI systems.

6. Introducing Federated Learning: The Final Privacy Layer

By now, we’ve placed privacy inside the model. But what if the data itself cannot move?

Imagine a multinational bank. Different branches operate in different countries, each with its own infrastructure and its own laws. Data cannot cross borders freely. Yet we still want a global loan-approval model.

This is where Federated Learning (FL) becomes powerful.

How Federated Learning Works

In FL, the data stays exactly where it is — inside the local branch, hospital, department, or device. Nothing is uploaded to the central server.

Here’s the workflow:

- Each client trains a small local model on its own data.

- Only the model weights (not the data) are shared with the central server.

- The server aggregates all updates using a method like FedAvg.

- The new global model’s global weights is sent back to each client.

- The cycle repeats.

No raw data moves. Only learned patterns travel. Here’s a simple sketch of the process:

Even without encryption or differential privacy, FL already protects individuals because:

- Raw data never leaves the device

- The server only sees weight updates

- Under normal assumptions, the server cannot reconstruct personal records

- FL can be combined with differential privacy, giving two layers of protection

Try it out

I will share the code in a separate blog. But this is what you can try it out yourself : Simulating Federated Learning using three to five “virtual banks.” You can use tools like Tensorflow Federated, Flower etc. for this exercise.

Each bank:

- Holds its own part of the loan dataset

- Trains a private local model

- Sends only weight updates to the central model

- Never shares any personal data

Then show:

- The server log — receiving only weights

- Each client’s local data stays isolated

- No central dataset exists

- The global model becomes stronger with each communication round

- Optional: each local model can add DP noise before sending updates

By the end of the demo, you can clearly see that federated learning allows collaboration without sharing data, completing our three-tier privacy architecture.

6. Conclusion: Building AI We Can Trust

When I first stepped into the world of machine learning, I was fascinated by what the models could do. But the more I worked with real-world data — health records, banking information, school admissions — the more I realised that AI doesn’t live in code alone. It lives in people’s lives.

And because of that, trust becomes the true currency of AI.

From differential privacy to federated learning, we saw how personal data can stay protected even while we learn from it.

A private AI model respects the boundaries of the people it serves.

If someone cannot trust you with their data, they will not trust your AI — no matter how accurate it is.

We studied the full stack:

- Operational privacy — keeping data in secure environments

- Differential privacy — adding mathematical protection

- Federated learning — allowing collaboration without centralising data

This is not academic theory. This is how modern banks, hospitals and global companies build responsible AI today.

If you understand these three layers, you’re already ahead of most beginners entering AI.

“Anyone can train a model. Not everyone can build a model the world can trust”

Want to learn more about everyday use of AI?

Discover more from Debabrata Pruseth

Subscribe to get the latest posts sent to your email.